CeSIA, represented at the AI Impact Summit 2026 in Delhi, India, by Charbel-Raphaël Segerie and Pauline Charazac, took an active part in discussions on AI safety and governance aimed at reducing systemic risks. The team engaged with policymakers, researchers, civil society actors, and industry representatives to address some of the most pressing challenges.

On 18 February 2026, CeSIA co-organised a workshop with The Future Society as part of the AI Safety Connect (AISC) day, focused on defining and governing unacceptable AI risks. National and regional representatives from Denmark, Brazil, Japan, Singapore, Canada, and the European Union, alongside multilateral officials and civil society, examined how to align approaches to AI risks that threaten security, fundamental rights, democratic processes, or international stability. The session built on the momentum of the Global Call for AI Red Lines. It first sought to map the areas where unacceptable risks are already subject to concrete regulation, then explored how the convergences taking shape could feed into interoperable or even global governance frameworks.

While many jurisdictions are beginning to specify prohibited AI practices, international coordination remains fragmented. Definitions, regulatory mechanisms, and enforcement modalities vary considerably across countries, and there is little consensus on cross-border implementation.

The main takeaways from this workshop are as follows:

- National prohibitions: several countries are independently developing bans targeting specific AI practices.

- Prioritisation challenges: AI-related harms are already materialising, but no consensus has emerged on how to prioritise current risks relative to future threats.

- Coalition-based coordination: global harmonisation appears unlikely; progress will more plausibly come through coalitions and sector-by-sector convergence.

- Enforcement gaps: difficulties with technical standardisation and implementation hinder effective enforcement.

- Data shortfalls: incident reporting stands out as a promising avenue for addressing the current lack of data.

CeSIA also contributed to several high-profile sessions:

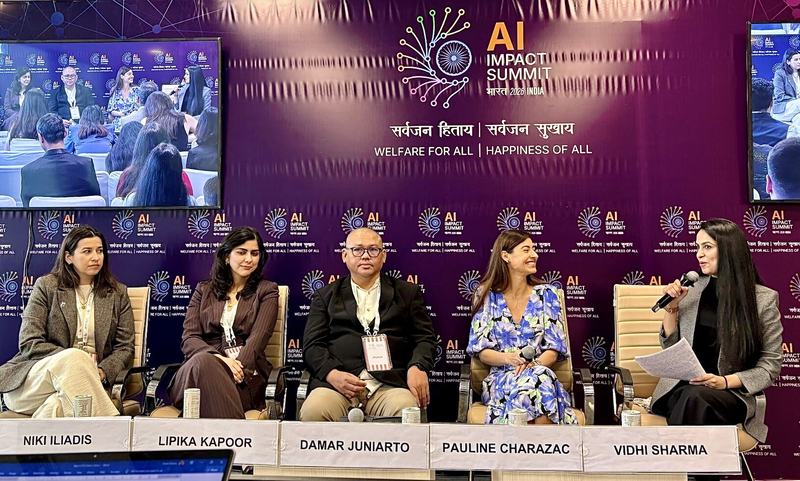

- the Fairtech panel at the AI Impact Summit, organised by The Future Shift Lab;

- the Oxford China Policy Lab workshop on AI sovereignty and its implications for global governance;

- the Women in Safety and Ethics (WISE) workshop held at GIZ, at which the Global Call for AI Red Lines was presented;

- and the WISE panel on AI governance, where CeSIA joined the Vedica Scholars Programme for Women and WISE to discuss policy, standards, and international frameworks.

As the AI Impact Summit underscored the urgency of a shared, operational governance framework, CeSIA remains fully committed to advancing these discussions and drawing red lines around unacceptable AI uses and risks. The 2027 AI Summit, to be held in Geneva, will be a pivotal moment for translating the emerging scientific consensus into effective global governance.